It came to a point when I switched to the Visual Studio Code because I wanted a more integrated experience. And I quite liked it! Mainly it’s because its Vim emulation is the best across all the editors including Atom, Sublime and JetBrains products. This is very important to me because I strongly believe that Vim editing language is superior to anything else.

So I’ve used the VS code with Vim mode (of course) for a while but from time to time I missed some Vim features like flexible splits.

And so I decided to revamp my Vim setup. But this time I made it differently.

I introspected my workflow and tuned Vim to the way I work. Not the other way around where you change your habits to work around editor setup. And I encourage you to do this yourself regardless of your editor.

Disclaimer: My setup may seem wrong to you but that’s because it’s tailored to my needs. Don’t blindly copy-paste my config – read the help, think and make it yours.

Here is the quick outline of what I did:

- Started by installing Vim the sane way

- Learned to use Vim help

- Learned core Vim features that I’ve missed

- Adjusted Vim to my workflow

1. Installing Vim the sane way

Let’s do this one quick – I use Neovim. I think it’s the best thing happened to the Vim community in the last decade. I like the project philosophy and that it rattled up Vim and now Vim 8.0 has adopted ideas from Neovim like async job control and terminal.

To install Neovim I recommend using AppImage. You just download the single file and run it. No libs, no containers, nothing. It also allows me to run the latest version hassle free. I’ve never used appimage before and thought that it would distribute as some kind of container image but it’s actually a good old binary:

$ file nvim.appimage

nvim.appimage: ELF 64-bit LSB executable, x86-64, version 1 (SYSV), dynamically linked, interpreter /lib64/ld-linux-x86-64.so.2, for GNU/Linux 2.6.18, stripped

After installing Neovim you should really run :checkhealth and fix top issues

– install the clipboard and python provider.

Next, read the help for Neovim setup – :h nvim-from-vim. I’m doing it simple,

just put this

set runtimepath^=~/.vim runtimepath+=~/.vim/after

let &packpath = &runtimepath

source ~/.vimrc

to the .config/nvim/init.vim and use the ~/.vimrc for the configuration.

After that, let’s start digging into it.

What this gives you is the latest version of Neovim that’s not conflicting with anything and compatible with Vim.

2. Use Vim help

IMO, Vim help is the most underestimated feature of Vim. I haven’t used it until this revamp and, boy, what I’ve missed! So many useless searching, reading silly blogs and StackOverflow could be avoided if I’ve used the help system.

Vim help consists of 3.7 megabytes of text, half a million of words

$ wc neovim-0.3.4/runtime/doc/* | tail -n1

90804 543942 3592651 total

Also, almost every plugin you install has its own help so these numbers are not final.

Vim help topics are comprehensive, detailed and cross-referenced. You may be overwhelmed at first because there is a lot of information here. But don’t be discouraged – it’s much much more efficient and useful to read and grasp comprehensive help topic than mindlessly searching for blog posts or StackOverflow. If you could only learn one thing from this post – please, learn to love the Vim help system.

Some tips that helped me.

:h pattthen TAB to find help on the subject starting with patt:h pattthen Ctrl-D to find help on the subject containing patt- Vim help system is full of cross-references – you can jump back and forward just like with code by using Ctrl-] and Ctrl-T.

Or even better – read the :help help which is help on help!

Let’s look at the example, if you type :h word-m Vim will open help on word

motions:

==============================================================================

4. Word motions *word-motions*

<S-Right> or *<S-Right>* *w*

w [count] words forward. |exclusive| motion.

<C-Right> or *<C-Right>* *W*

W [count] WORDS forward. |exclusive| motion.

...

Here you can see the header Word motions, its tag word-motions that is used

as a subject for :h command.

Next, you see the help itself describing word motions.

Note that there are some words that have some funky symbols around them or shown

in different colors. Anything that doesn’t look like the plain text is a help

topic by itself – you can jump into it by Ctrl-]. So in this example, we could

find what is [count] or what is |exclusive| motion. And that’s enough for

efficient using of Vim help.

Here are the things that I’ve found in Vim help:

- I’ve configured statusline with the help of

:h statusline. All the blog posts were just a waste of time. :h ins-completiondescribes comprehensive builtin completion system. Now, I’m using Ctrl-X Ctrl-F to complete filenames in the current directory (useful to insert links in Markdown files). Also, whole line completion with Ctrl-X Ctrl-L is useful for editing data files.:h window-movingtaught me that you can move splits around, e.g. Ctrl-w H will move current window to the left (it will also convert vertical split to horizontal). Also, the whole:h windows.txtis amazing.

Finally, I recommend to everyone familiar with Vim to review :h quickref from time to time.

3. Use missed core features

After I’ve learned to use Vim help I started to discover things that I’ve missed but that was always there.

Remember to check the help for each thing in this list – I’ve conveniently supplied Vim help command and a link to online help.

Auto commands

Auto commands allow you to tune Vim behavior based on filename or filetype. Basically, it executes Vim commands on events.

I use it to set correct filetype for some exotic files like this

autocmd BufRead,BufNewFile *.pp setfiletype ruby

autocmd BufRead,BufNewFile alert.rules setfiletype yaml

Or to tune settings for particular filetype like this

autocmd FileType yaml set tabstop=2 shiftwidth=2

Other editors required me to install full-blown extensions like Puppet extension or YAML extension but with Vim I keep things simple and lightweight.

Persistent undo

This feature is so awesome yet none of the other editors have it.

It sounds simple – when you exit Vim your edit history is saved so you can open the file again 2 days later and undo the changes.

Edit history is an important part of your context so I think once you get used to it you couldn’t use any other editor without this feature.

To enable persistent undo I’ve done this:

set undodir=~/.vim/undodir

set undofile

Bliss!

Clipboard

This one is actually more of a hard fix than a feature.

Clipboard in Linux is a complicated story. All these buffers and selections don’t make things understandable. And Vim makes it even more complicated with its registers.

For years I had these mappings

" C-c and C-v - Copy/Paste to global clipboard

vmap <C-c> "+yi

imap <C-v> <esc>"+gpi

that makes Ctrl-c and Ctrl-v work.

But why use two-key combos when you can use a simple y and p for copying and

pasting?

Turns out, you can make it work very nice by using this single setting:

set clipboard+=unnamed

It makes y and p copy and paste to the “global” buffer that is used by other

apps like the browser.

Mappings

What I like the most about Vim is that its normal mode allows you to use all keys for a command while others require to use some key combo based on modifier (Ctrl-o, Ctrl-s).

When you can use any key for a command it’s natural to use a single key

shortcuts, e.g. p to paste the text.

And what is even more awesome is that you can map a key or a sequence of keys at your own will.

Here are my most used mappings:

nnoremap ; :Buffers<CR>

nnoremap f :Files<CR>

nnoremap T :Tags<CR>

nnoremap t :BTags<CR>

nnoremap s :Ag<CR>

NOTE: these mappings override default Vim motions and actions because I don’t use them. It may be better for you to map it via leader key. Anyway, read the help on what these letters do by default and decide whether you want to override them.

These mappings invoke fzf command (more on this later) using a single

key.

If I need to go to some function I just press t and got the list of tags of

the current file. Not Ctrl-t, not Shift-t, just t. Combined with fzf

fuzzy find it’s very powerful.

True colors in Vim

For years I’ve been using Vim in a terminal without knowing that I’ve been using 8-bit colorscheme. And it was actually ok because 256 colors is kinda enough.

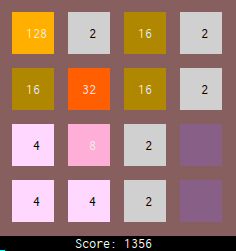

It’s worth noting that I’m using my own colorscheme called tile. While tuning some of the colors I didn’t understand why I don’t see the difference and then I’ve read the help on syntax highlighting and realized that I want true colors in Vim.

Also, most of the colorschemes that you see in the wild, e.g. on https://vimcolors.com/ are presented in the 24-bit colors. So you’ll be disappointed when you don’t see the same colors when you install the colorscheme in your Vim.

Also also, your terminal is almost certainly capable of displaying in True Color so why limit yourself to the 256?

It’s all boils down to the simple set termguicolors in your vimrc. This

options simply enable true color for Vim. Here is the difference with my

colorscheme:

Search history

The last one is quick but so great that I even tweeted about it:

4. Tuning Vim to my workflow

All of the things above already boosted my productivity but Vim can do even better when you know what you want.

In my case, here was the list:

- Working with projects (sessions)

- Autocompletion

- Quick file find by

fzf - Quick search in files via

ag(the_silver_searcher) - Tag jumping using ctags index

- Find usages via cscope index

- Git integration (spoiler: no Fugitive)

- Linter integration

- Build integration

- Various niceties

So let’s dive in.

Working with projects

For me working with projects is about saving context – Open files, layout, cursor positions, settings, etc.

Vim has sessions (:help session) that does all that.

To save a session you have to :mksession! (or short :mks!) and then to load

session start it with vim -S Session.vim. It may be enough for you but I found

it kinda cumbersome to use as is.

First thing I’ve tried was to automate saving session. I’ve tried nice and

simple obsession plugin that does just

that. For the loading part, I’ve created bash alias alias vims='vim -S Session.vim'.

This was OK but a few things were annoying. The way I work is like this: I have

multiple projects that are kept in separate directories as separate git repos.

If I want to do something I cd into that dir, open the file, edit it or just

view, and then do something else.

When I was opening a file with Vim inside a directory session wasn’t applied, so

I had to manually :source it. After doing this for a week it was obvious that

it’s not the way I wanted.

And then I’ve found an amazing vim-workspace plugin that does

exactly what I need. It creates a session when you :ToggleWorkspace and keeps

it updated. Then when you open any file in the workspace it automatically loads

the session.

It also has very nice command :CloseHiddenBuffers that, well, closes hidden

buffers. It’s very useful because during session lifetime you open files and Vim

keeps them open. With this single command you can leave only the current buffer.

So I settled on the vim-workspace and found peace.

Autocompletion

Since the last time I’ve done Vim configuration, which was around 2008, a lot of things changes. But the most exploded sphere in Vim, from my point of view, was autocompletion support in Vim.

Vim gained sophisticated completion engine (:h ins-completion)

with the omni-competion that gave birth to the whole load of plugins.

YouCompleteMe, OmniCppComplete,

neocomplcache/neocomplete/deoplete, AutoComplPop, clang_complete, …

It is complicated and I was exhausted while researching on this topic, so here is the shortest possible guide on completion plugins:

- YouCompleteMe – very powerful but huge plugin (>200 MB installed). Works as a client-server, requires a lot of utils.

- VimCompletesMe – a wrapper around Vim’s built-in completion hence super lightweight.

- Deoplete – current completion plugin by Shougo (previous were neocomplete and neocomplcache). Works as a client-server, much more lightweight than YouCompleteMe, can complete from a diverse set of sources.

- Other plugins are usually specific for concrete language.

My choice is deoplete because it’s fast, versatile, and not heavy. If you want to keep things native, then I’d recommend using VimCompletesMe. I’ve tried to use YouCompleteMe, had some troubles with installation, gave it 250 MB and it just showed me the function names without signatures and argument names. So I was disappointed and switched to deoplete that provides more info.

For the Deoplete I’ve added a few completion sources:

- Shougo/neco-syntax for generic syntax completion

- ujihisa/neco-look for dictionary completion – useful for writing blog posts.

- Shougo/deoplete-clangx for C/C++ completion

- deoplete-plugins/deoplete-go for Go completion

- deoplete-plugins/deoplete-jedi for Python completion

There is also tmux-complete that can complete from other tmux panes. Like view logs in one pane and Vim in the other pane can complete the values from it! It works but I don’t use tmux much.

There is also webcomplete completion source that completes from the currently open web page in Chrome. Alas, it works only on macos. There is an open discussion about adding support for Chrome on Linux.

Quick file find

The ability to quickly open file is crucial to my productivity. And I need to

open a file by partial name. As an example, suppose I’m working in some ansible

repo. I know that I have a template file for setting environment vars. I don’t

remember exactly the full path but I know that it has env in it.

So I use fzf to sift through the list of file in the project that is generated

by ag -l. Here is how it works live:

There are other plugins that do that like

CtrlP but I use fzf for other things

– list of buffers (open files), search, git commits, list of tags, history of

search and history of command. Anything that should be sifted through is piped

to the fzf because it does this job really well.

File find is launched with a single letter command f in the normal mode.

Quick search in files

Before this revamp I’ve used builtin / Vim command to search in the current

buffer and :Ag to search in the files. I really like ag – it’s fast and

very handy.

After I’ve embarked on the fzf I hooked Ag output to it and now it works even

better:

File search is launched with a single letter command s in the normal mode.

Find usages

This was my long wished dream – when I stumble on some function I want to see its callers. Sounds simple but it’s a difficult task. The only thing that can do it and that is not tied to an IDE is cscope.

But cscope is a, how to say nice, weird thing. It requires you to build its own database by supplying a list of files and then provides tui interface to interact with. Its documentation doesn’t help much and it feels that nobody uses it.

This idiosyncratic cscope workflow was the main reason why I occasionally opted for other editors and IDEs. Just to see if they have “find usages” implemented well.

But this time I said to myself – you have to make it work. And here is what I did.

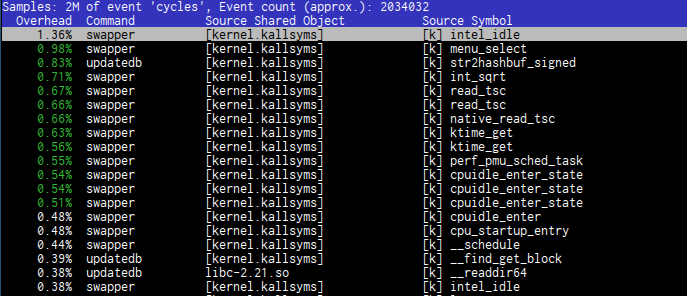

First, I started with automatically generating cscope database. I use vim-gutentags for this – it generates ctags index and cscope database on file save.

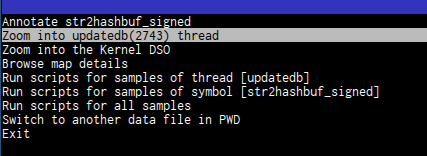

Then to integrate cscope I’ve tried different things:

- Tried to use CCTree but it builds its own cscope database and fails with some strange errors I don’t want to touch. So ditch it.

- Tried various cscope plugins – everything is just remapping of builtin cscope functions. No fzf support

- Finally settled on this thing based on https://gist.github.com/amitab/cd051f1ea23c588109c6cfcb7d1d5776

" cscope

function! Cscope(option, query)

let color = '{ x = $1; $1 = ""; z = $3; $3 = ""; printf "\033[34m%s\033[0m:\033[31m%s\033[0m\011\033[37m%s\033[0m\n", x,z,$0; }'

let opts = {

\ 'source': "cscope -dL" . a:option . " " . a:query . " | awk '" . color . "'",

\ 'options': ['--ansi', '--prompt', '> ',

\ '--multi', '--bind', 'alt-a:select-all,alt-d:deselect-all',

\ '--color', 'fg:188,fg+:222,bg+:#3a3a3a,hl+:104'],

\ 'down': '40%'

\ }

function! opts.sink(lines)

let data = split(a:lines)

let file = split(data[0], ":")

execute 'e ' . '+' . file[1] . ' ' . file[0]

endfunction

call fzf#run(opts)

endfunction

" Invoke command. 'g' is for call graph, kinda.

nnoremap <silent> <Leader>g :call Cscope('3', expand('<cword>'))<CR>

What it does is call cscope and feed its output to fzf. '3' is the field

number in cscope TUI interface (yeah, you read it correct, :facepalm:)

corresponding to Find functions calling this function.

This thing works – I pasted it to my vimrc and invoke it via <Leader>g but it

needs to be packaged as a plugin. Maybe I’ll do this sometime.

Overall cscope feels like fucking dirt but we don’t have anything better.

Git integration

I’ve got used to console interface of git because it’s stable, independent of any editor and it provides all features of git because it’s the main interface. And I’m very comfortable with this way of working with git.

So my requirements for Git was pretty little – actually, I wanted to explore how this integration could help my workflow.

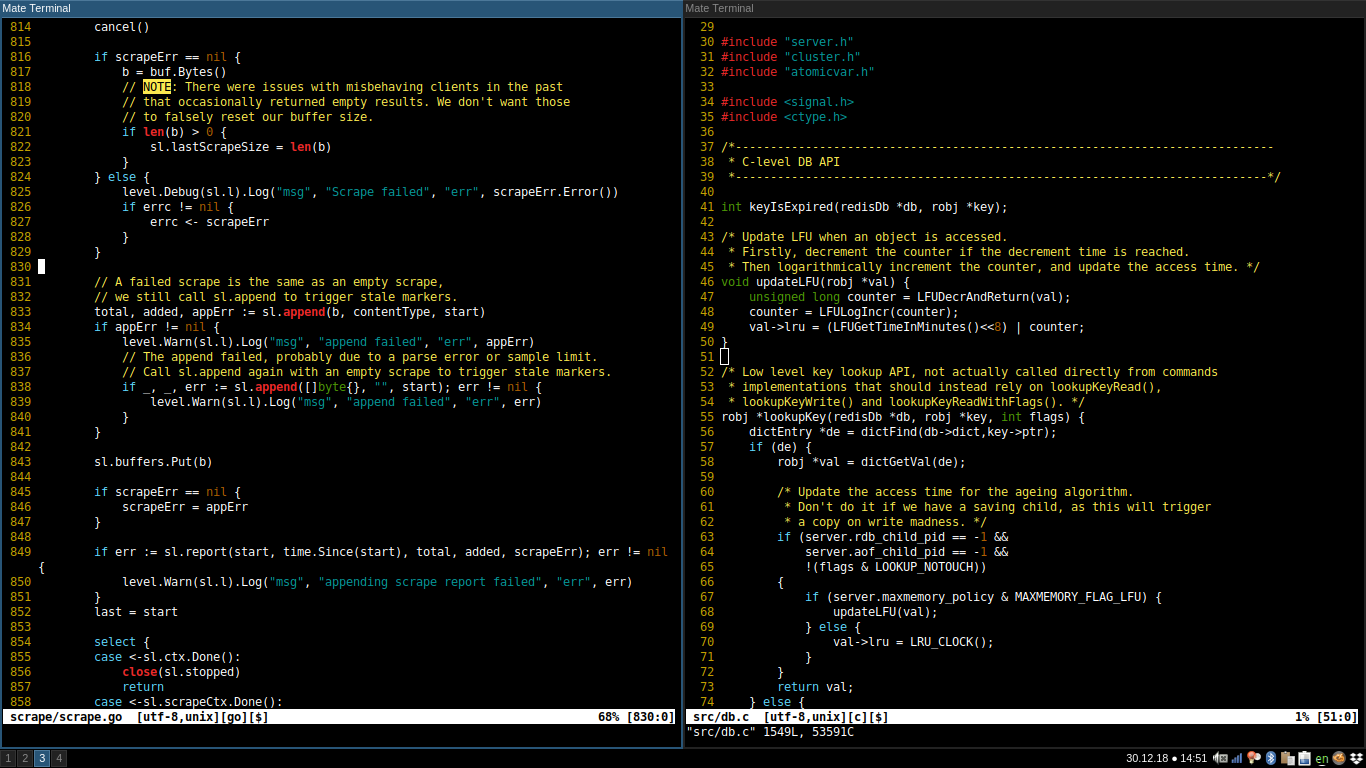

First, I’ve tried fugitive but quickly found that it was not for me. It was not suitable for my workflow. The main problem is that it messes my windows layout by opening its own buffers with git output:

- When I invoke

:GstatusI want to see the changes, so I invoke:Gdiff. It opens the diff in the closest window replacing buffer I was editing. That’s OK but when I’m done with the diff I want to close diff and return to the previous buffer. And this is where it gets complicated – diff is a 2 window, so I have to return with Ctrl-o to the previous buffer in one window and then kill the other buffer with :bd. This is really not convenient. :Glogjust spits git log output in messages.:Gblameshows the standard git blame output and that’s OK. When I try to view commit from blame it opens it in the current window, again messing with my layout, and scrolls the commit to the diff of the chosen lines. This is not what I want, I want to view the commit message and other related changes. The scrolled part is what I already saw when I was doing blame.

So I’ve ditched it and settled on vim-gitgutter because it’s nice and doesn’t interfere with my workflow. This plugin shows line status in the gutter. And it provides a motion for next/previous hunk.

Then I’ve tried to use vimagit and it’s great! This is what I really want for Git integration – a convenient staging of changes and writing commit message. Vimagit gives me a buffer with unstaged and staged diffs and a commit message section and simple to use mappings. Really great!

Finally, I’ve found git-messenger that shows blame info (with history) in the floating window.

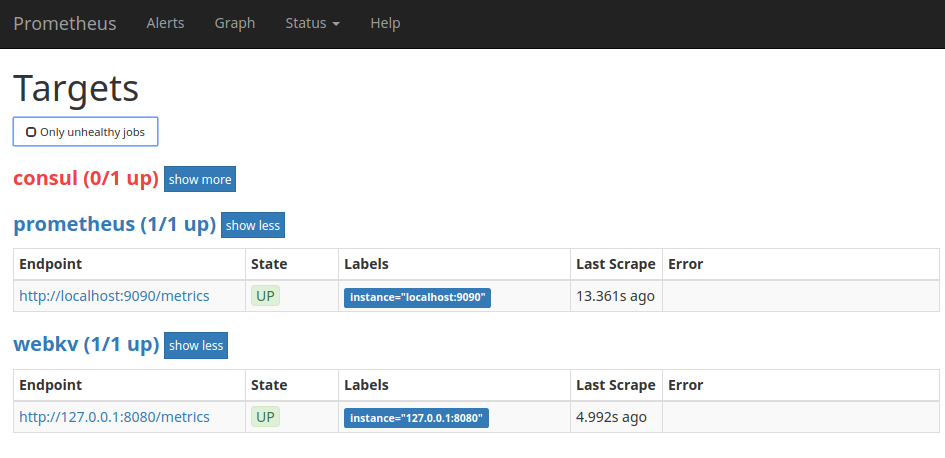

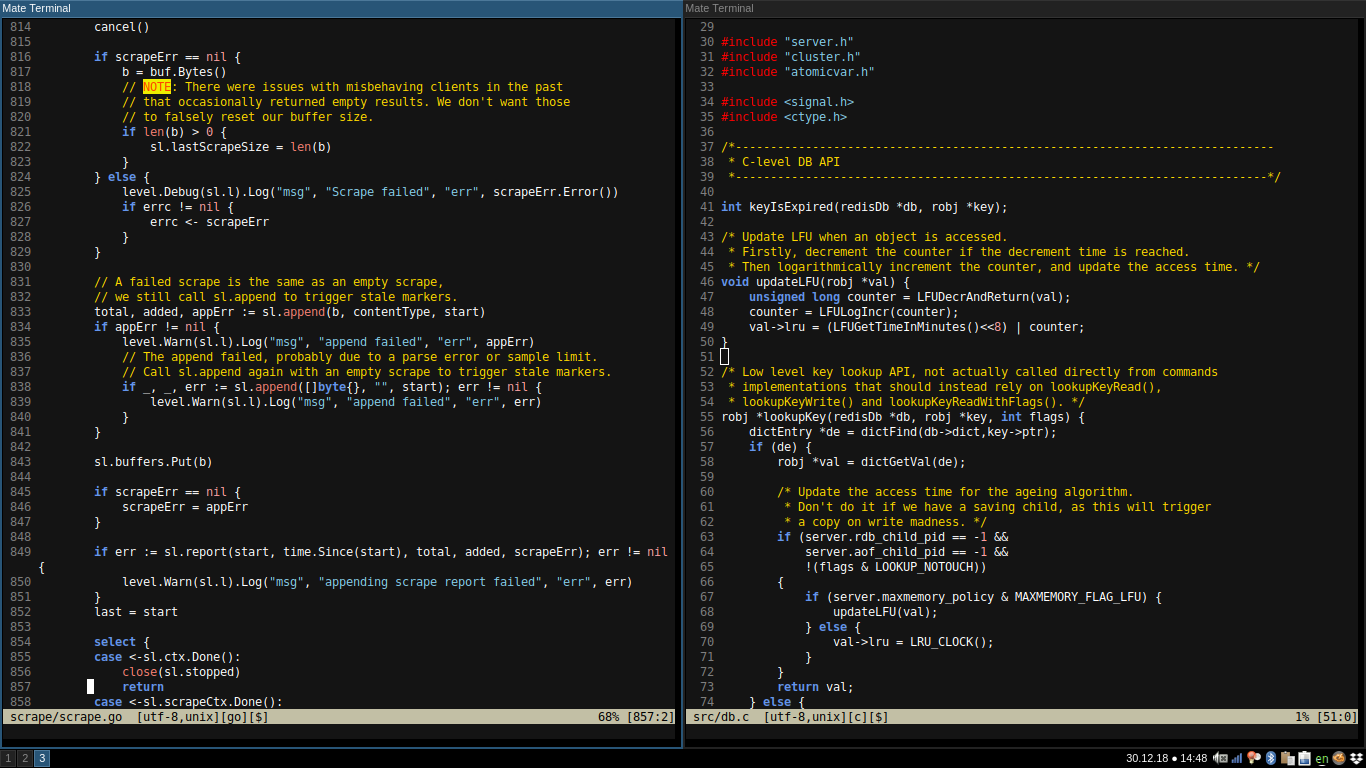

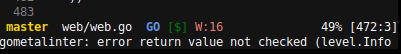

Build and linter integration

Similar to Git this wasn’t a hard requirement because I’m doing building and linting from the shell or automatically in CI. But, again, I wanted to explore what could be done here.

I setup Neomake as a linting engine. It has a pre-configured list

of linters depending on filetype. I’ve configured it to run on only on buffer

write (it can be launched at an interval, at reading, etc.) to avoid useless

work. The count of warnings and errors of neomake run is shown in the in

statusline (see screenshot below ). And the results of linting can be viewed in

location list – :lopen, :lnext, :lprev.

Also, Neomake can invoke make program (:help makeprg)

without blocking the UI so I’ve added this mapping and that’s it:

nnoremap <leader>m :Neomake!<cr>

The results of build are in the QuickFix list (:help quickfix).

Various niceties

ZoomWinTab

This plugin is a godsend for me. I use splits a lot and

sometimes I want to temporary zoom the current window. With this plugin, I just

do <Ctrl-w>z to toggle the zoom. This is similar to the tmux

zoom feature.

Sensible

vim-sensible provides sensible defaults like enabling filetype, autoread, statusline. But most important for me was this line

set formatoptions+=j " Delete comment character when joining commented lines

Commentary

Commentary plugin adds actions to quickly comment line, selection or pretty much any motion.

Surround

Surround plugin allows me to easily add, change or delete “surroundings”. For

example, I often use it to add quotes to the word with ysw" (I have a

mapping for that) and change single quotes to double quotes

with cs'".

Conclusion

So here I am, happily living with Vim for about 3 months now. I’ve intentionally waited from posting this to prove myself that my new setup is worth it. And, gosh, it is!

The main boost was getting comfortable with reading Vim help. Yes, I’m trying again to convince you about reading it because it makes you reason about what you do correctly.

And the key point is to tune Vim into your workflow, not the other way around.

Also, I’m tweaking things as I keep finding new ways to make my life in the

editor more pleasant. The recent one was set hidden (:h hidden) to

prevent nagging 'No write since last change' message when switching buffers.

There is no magic here in Vim when you put some conscientious effort and try to do things your way.

That’s it for now, till the next time!

]]>